When business metrics shift unexpectedly, the first instinct is often to question the dashboard or the analyst. In practice, many “mystery spikes” are caused by upstream data changes that quietly ripple through pipelines and calculations. Change propagation analysis is the discipline of predicting and measuring that ripple: if a source table, API feed, or definition changes, which downstream metrics move, by how much, and why. If you have taken a data analytics course, you may have learned how to compute metrics correctly; change propagation adds the missing operational layer—how to keep those metrics trustworthy as the data landscape evolves.

Why upstream changes break downstream metrics more often than teams expect

An “upstream” data source is any system that provides raw inputs: product databases, CRM exports, payment gateways, clickstream events, or third-party enrichment. “Downstream” metrics are the outputs used for decisions: revenue, churn, conversion, fulfilment time, NPS drivers, cohort retention.

Breakages are common because upstream change is normal. Teams rename fields, alter formats, adjust logic, backfill history, or modify event tracking. Even when the change is “correct,” it can invalidate assumptions in downstream models.

Two realities make this costly. First, bad data is expensive at scale—one widely cited estimate pegs the annual cost of poor-quality data in the United States at trillions of dollars. Second, data teams spend significant time diagnosing issues instead of improving products; one survey summary reported teams wasting around 40% of their time troubleshooting data downtime (periods when data is missing, wrong, or partial).

The core idea: treat data dependencies like software dependencies

In software, teams ask: “If we change this service, what breaks?” In analytics, the equivalent is: “If we change this source, what metrics change?” To answer that, you need two building blocks:

- Data lineage (dependency mapping)

Data lineage is a map of where data comes from, how it is transformed, and where it is used. Modern lineage approaches emphasise impact analysis, meaning the ability to see which downstream assets depend on an upstream object, so teams can assess blast radius before deploying a change.

- Change classification (what kind of change is this?)

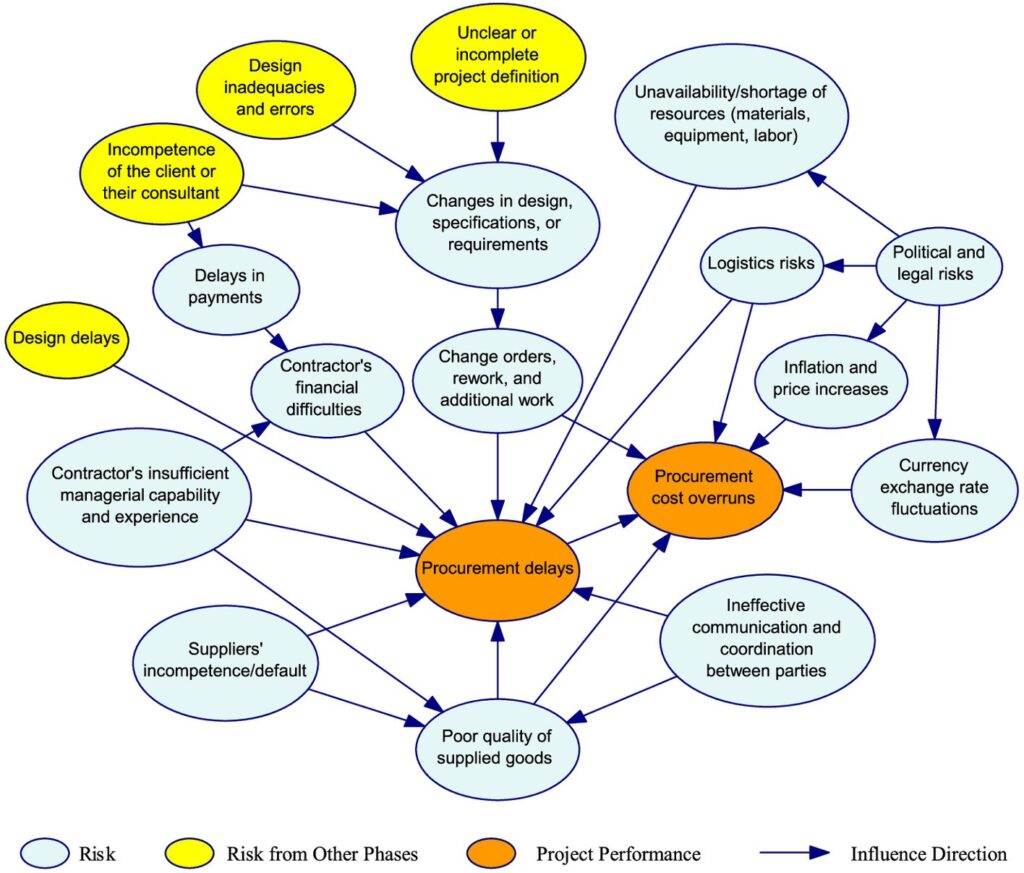

Not all upstream changes are equal. Practical change propagation analysis separates changes into buckets such as:

- Schema changes: fields added/removed/renamed; type changes (string → integer); nullability changes.

- Semantic changes: a column keeps its name, but meaning changes (e.g., “active_user” definition updates).

- Logic changes: deduplication rules, joins, filtering, currency conversion, time zone handling.

- Backfills/reprocessing: historical data is rewritten, which can move period-over-period metrics.

- Quality drift: increased missingness, duplicates, late-arriving events, or unexpected outliers.

Schema changes often cause loud failures (pipelines break). Semantic and logic changes are more dangerous because they can produce “valid-looking” numbers that are wrong.
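To make the "loud" bucket concrete, here is a minimal sketch of a schema diff in plain Python. The `Column` type, the `classify_schema_changes` helper, and the sample table are all hypothetical, invented for illustration. Note what the sketch cannot do: a rename shows up as one removal plus one addition, and semantic or logic changes are invisible to it, which is exactly why those buckets are the dangerous ones.

```python
from typing import Dict, List, NamedTuple


class Column(NamedTuple):
    """Hypothetical column description: a type name and a nullability flag."""
    dtype: str
    nullable: bool


def classify_schema_changes(old: Dict[str, Column], new: Dict[str, Column]) -> List[str]:
    """Return readable schema changes between two versions of one table."""
    changes = []
    for name in sorted(old.keys() - new.keys()):
        changes.append(f"removed: {name}")
    for name in sorted(new.keys() - old.keys()):
        changes.append(f"added: {name}")
    for name in sorted(old.keys() & new.keys()):
        before, after = old[name], new[name]
        if before.dtype != after.dtype:
            changes.append(f"type changed: {name} ({before.dtype} -> {after.dtype})")
        if before.nullable != after.nullable:
            changes.append(f"nullability changed: {name}")
    return changes


# Illustrative schemas only; not taken from any real system.
old_schema = {
    "order_id": Column("string", False),
    "amount": Column("string", False),
    "coupon": Column("string", True),
}
new_schema = {
    "order_id": Column("string", False),
    "amount": Column("integer", True),
    "channel": Column("string", True),
}

schema_changes = classify_schema_changes(old_schema, new_schema)
for change in schema_changes:
    print(change)
```

In a real pipeline the two schema dictionaries would come from the warehouse's metadata or from a versioned schema file rather than being typed by hand.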

A practical workflow that keeps metrics stable under change

A workable approach does not require a perfect data catalogue on day one. It requires a repeatable habit:

1) Define the metric contract first

Write the metric definition in plain English: formula, filters, grain (per user/per order), time window, and exclusions. This becomes your “expected behaviour.”
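A plain-English definition can also be kept next to the code as a small structured record, so it can be reviewed and versioned. The sketch below is one possible shape, not a standard: the `MetricContract` class, its field names, and the example metric are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class MetricContract:
    """Hypothetical record of a metric's expected behaviour."""
    name: str
    formula: str
    grain: str
    time_window: str
    filters: Tuple[str, ...] = ()
    exclusions: Tuple[str, ...] = ()

    def describe(self) -> str:
        """Render the contract as plain text for reviews or documentation."""
        lines = [
            f"Metric: {self.name}",
            f"Formula: {self.formula}",
            f"Grain: {self.grain}",
            f"Time window: {self.time_window}",
            "Filters: " + ("; ".join(self.filters) or "none"),
            "Exclusions: " + ("; ".join(self.exclusions) or "none"),
        ]
        return "\n".join(lines)


# Example values are invented; substitute the team's real definition.
weekly_conversion = MetricContract(
    name="weekly_conversion_rate",
    formula="orders placed / sessions started",
    grain="per user",
    time_window="calendar week, UTC",
    filters=("platform = web",),
    exclusions=("internal test accounts",),
)
print(weekly_conversion.describe())
```

Freezing the dataclass is a deliberate choice here: a contract that can be mutated at runtime is not much of a contract.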

2) Map the dependency chain for that metric

Identify the upstream tables and transformations feeding the metric. Tools that support lineage and impact analysis make this faster, and the underlying principle is simple: you cannot manage change you cannot see.
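Even without a lineage tool, the dependency chain can be sketched as a small directed graph and walked in either direction. The example below assumes a hand-written edge list; the asset names and the `reachable` and `reverse_edges` helpers are hypothetical. Walking the reversed graph answers "what feeds this metric?", and walking it forwards answers "what is the blast radius of this source?".

```python
from collections import deque
from typing import Dict, List, Set

# Edges point from an upstream asset to the assets built directly on it.
# All asset names are invented for illustration.
LINEAGE: Dict[str, List[str]] = {
    "raw.orders": ["staging.orders_clean"],
    "raw.refunds": ["staging.refunds_clean"],
    "staging.orders_clean": ["marts.revenue_daily", "marts.conversion_weekly"],
    "staging.refunds_clean": ["marts.revenue_daily"],
    "marts.revenue_daily": ["dashboard.finance"],
    "marts.conversion_weekly": ["dashboard.growth"],
}


def reverse_edges(edges: Dict[str, List[str]]) -> Dict[str, List[str]]:
    """Flip the graph so edges point from an asset to its direct inputs."""
    flipped: Dict[str, List[str]] = {}
    for parent, children in edges.items():
        for child in children:
            flipped.setdefault(child, []).append(parent)
    return flipped


def reachable(start: str, edges: Dict[str, List[str]]) -> Set[str]:
    """Breadth-first walk collecting every asset reachable from `start`."""
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        current = queue.popleft()
        for neighbour in edges.get(current, []):
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen


# Which inputs feed the revenue metric?
upstream = reachable("marts.revenue_daily", reverse_edges(LINEAGE))
# Which assets move if the refunds feed changes?
downstream = reachable("raw.refunds", LINEAGE)

print("feeds revenue:", sorted(upstream))
print("affected by refunds:", sorted(downstream))
```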

3) Add automated checks that detect breaking and subtle changes

Automated validation catches issues before they reach dashboards. For example, Great Expectations explicitly frames schema and assumption checks as protection against disruptive upstream change.

Useful checks include:

- Schema checks (field presence, types, allowed values)

- Volume checks (row counts within expected ranges)

- Freshness checks (data arriving on time)

- Distribution checks (sudden shifts in key measures)
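The four check types above can be sketched in a few lines of plain Python. This is deliberately not the Great Expectations API; the function names, thresholds, and sample rows are assumptions chosen for illustration, and the schema check covers only field presence and types (allowed values are left out for brevity).

```python
from datetime import datetime, timedelta, timezone
from statistics import mean
from typing import Dict, List


def check_schema(rows: List[dict], expected_types: Dict[str, type]) -> List[str]:
    """List rows with a missing field or a value of the wrong type."""
    problems = []
    for index, row in enumerate(rows):
        for field, expected in expected_types.items():
            if field not in row:
                problems.append(f"row {index}: missing {field}")
            elif not isinstance(row[field], expected):
                problems.append(f"row {index}: {field} is not {expected.__name__}")
    return problems


def check_volume(row_count: int, low: int, high: int) -> bool:
    """Pass if the row count falls inside the expected range."""
    return low <= row_count <= high


def check_freshness(latest_event: datetime, now: datetime, max_lag: timedelta) -> bool:
    """Pass if the newest record is no older than `max_lag`."""
    return now - latest_event <= max_lag


def check_distribution(values: List[float], baseline_mean: float, tolerance: float) -> bool:
    """Pass if the batch mean is within a relative `tolerance` of the baseline."""
    return abs(mean(values) - baseline_mean) <= tolerance * abs(baseline_mean)


# Invented sample batch with two deliberate schema problems.
rows = [
    {"order_id": "A1", "amount": 120.0},
    {"order_id": "A2", "amount": "95"},  # amount arrived as a string
    {"order_id": "A3"},                  # amount is missing
]

schema_problems = check_schema(rows, {"order_id": str, "amount": float})
volume_ok = check_volume(len(rows), low=2, high=10)
freshness_ok = check_freshness(
    latest_event=datetime(2024, 1, 2, 9, 0, tzinfo=timezone.utc),
    now=datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc),
    max_lag=timedelta(hours=2),
)
distribution_ok = check_distribution([120.0, 130.0, 110.0], baseline_mean=100.0, tolerance=0.1)

print(schema_problems, volume_ok, freshness_ok, distribution_ok)
```

A mean-versus-baseline comparison is only the simplest possible distribution check; it will miss shifts that leave the mean unchanged, so treat it as a starting point.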

4) Use data contracts for producer–consumer alignment

A data contract is a formal agreement between upstream producers and downstream consumers about structure and semantics, not just field names. This reduces surprise changes and forces intentional versioning.

In practice, contracts work best when enforced in CI/CD: a producer cannot deploy a breaking schema update without either a version bump or an approved migration path.
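As a rough sketch of such a CI gate, the function below blocks a proposed contract when it breaks consumers without a major version bump or an approved migration. Everything here is an assumption for illustration: the dictionary layout, the `contract_gate` name, the `migration_approved` flag, and the use of three-part version strings. It also checks structure only; the semantic side of a contract still needs human review.

```python
from typing import Dict, Tuple


def major_version(version: str) -> int:
    """Extract the major number from a 'major.minor.patch' string."""
    return int(version.split(".")[0])


def is_breaking(old_fields: Dict[str, str], new_fields: Dict[str, str]) -> bool:
    """Removed fields and changed types break consumers; added fields do not."""
    for name, dtype in old_fields.items():
        if name not in new_fields or new_fields[name] != dtype:
            return True
    return False


def contract_gate(current: dict, proposed: dict, migration_approved: bool = False) -> Tuple[bool, str]:
    """Decide whether a proposed contract may be deployed."""
    if not is_breaking(current["fields"], proposed["fields"]):
        return True, "compatible change"
    if major_version(proposed["version"]) > major_version(current["version"]):
        return True, "breaking change with major version bump"
    if migration_approved:
        return True, "breaking change with approved migration path"
    return False, "breaking change needs a major version bump or an approved migration"


# Hypothetical contracts: the proposal changes the type of `amount`.
current = {"version": "1.4.0", "fields": {"order_id": "string", "amount": "decimal"}}
proposed = {
    "version": "1.5.0",
    "fields": {"order_id": "string", "amount": "string", "channel": "string"},
}

blocked = contract_gate(current, proposed)
allowed = contract_gate(current, {**proposed, "version": "2.0.0"})
print(blocked)
print(allowed)
```

In a pipeline, the boolean would typically drive the exit code of a CI step so that a failed gate stops the deploy.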

5) Run “metric diff” analysis during changes

Before and after an upstream modification, compare metric outputs on the same historical window. If revenue changes by 2%, you want a traceable explanation: which subset changed, from which transformation, due to what rule.
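A metric diff can be sketched as: compute the metric twice over the same rows, once with the old rule and once with the new one, then break the difference down by segment. The example below assumes a hypothetical change that starts deduplicating orders by `order_id`; the data, field names, and helper functions are invented, and amounts are kept in integer cents to avoid floating-point noise.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Same historical window for both runs; A2 appears twice upstream.
orders = [
    {"order_id": "A1", "region": "EU", "amount_cents": 1300},
    {"order_id": "A2", "region": "EU", "amount_cents": 100},
    {"order_id": "A2", "region": "EU", "amount_cents": 100},
    {"order_id": "B1", "region": "US", "amount_cents": 2500},
    {"order_id": "C1", "region": "APAC", "amount_cents": 1000},
]


def revenue_by_region(rows: List[dict], dedupe: bool) -> Dict[str, int]:
    """Sum revenue per region, optionally keeping only the first row per order_id."""
    totals: Dict[str, int] = defaultdict(int)
    seen = set()
    for row in rows:
        if dedupe:
            if row["order_id"] in seen:
                continue
            seen.add(row["order_id"])
        totals[row["region"]] += row["amount_cents"]
    return dict(totals)


def segment_movers(before: Dict[str, int], after: Dict[str, int]) -> List[Tuple[str, int]]:
    """Return segments whose value changed, largest absolute change first."""
    deltas = {
        segment: after.get(segment, 0) - before.get(segment, 0)
        for segment in before.keys() | after.keys()
    }
    changed = [(segment, delta) for segment, delta in deltas.items() if delta != 0]
    return sorted(changed, key=lambda item: (-abs(item[1]), item[0]))


before = revenue_by_region(orders, dedupe=False)  # old logic
after = revenue_by_region(orders, dedupe=True)    # new logic

total_before = sum(before.values())
total_after = sum(after.values())
pct_change = 100 * (total_after - total_before) / total_before
movers = segment_movers(before, after)

print(f"total change: {pct_change:.1f}%")
print("segments that moved:", movers)
```

Here the 2% drop traces to a single region and a single rule, which is the kind of explanation the diff is meant to produce.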

This is also a useful teaching moment for teams, whether during internal enablement or in a data analytics course in Mumbai, because it turns “dashboard checking” into disciplined impact verification.

Real-world examples where propagation analysis prevents expensive decisions

- Marketing attribution: A change in campaign tagging upstream (new UTM parsing or channel mapping) can shift ROI and CAC overnight. Without impact checks, teams may cut a campaign that is actually performing well.

- Finance reporting: A currency conversion rule update, or a reprocessing of refunds, can alter recognised revenue and margins. Silent changes here can trigger incorrect board-level narratives.

- Product metrics: Event instrumentation updates (e.g., redefining “session” or “active”) can distort retention trends and A/B test outcomes. Lineage plus metric diffing helps distinguish behavioural change from tracking change.

These are not edge cases; they are common failure modes in growing data estates, which is why impact analysis and lineage are frequently highlighted as core benefits of lineage programmes.

Concluding note

Change propagation analysis is not paperwork. It is a practical control system for analytics: dependency visibility (lineage), shared expectations (contracts), and automated detection (tests) wrapped into a repeatable workflow. Done well, it turns upstream modifications from “surprise metric swings” into managed changes with known blast radius and documented effects. The mindset is straightforward: every important metric deserves the same discipline you would apply to production software. That operational habit is often introduced alongside core measurement concepts in a data analytics course and reinforced through hands-on governance practices in a data analytics course in Mumbai. It is what keeps downstream decision-making stable even as upstream data inevitably evolves.
